
Shown below and click on the Continue button. Now it’s time to register Redshift as a linked service, which in our case is the data source. Provide appropriate details and click on Next. In the first step of the wizard, we need to provide basic details like the name of the pipeline, description as wellĪs the execution frequency. From the portal, click on theĬopy data icon to start the data wizard which would help us to build the desired data pipeline. Once created, it would look as shown below.Ĭlick on the Author & Monitor link which would open the portal as shown below. Click on Create Newīutton and create a new instance. Click on it and it would navigate you to the Azure Data Factory dashboard page. Navigate to All Services, from the Databases menu, you would find the Azure Dataįactory item. The first step is to set up an Azure Data Factory instance, which is going to be the data movement vehicle for theĭata population. Populating data into the database from AWS Redshift. Once these pre-requisites are in place, we are ready to focus on the method of We need to have an Azure SQL Server instanceĪnd a database hosted on it.

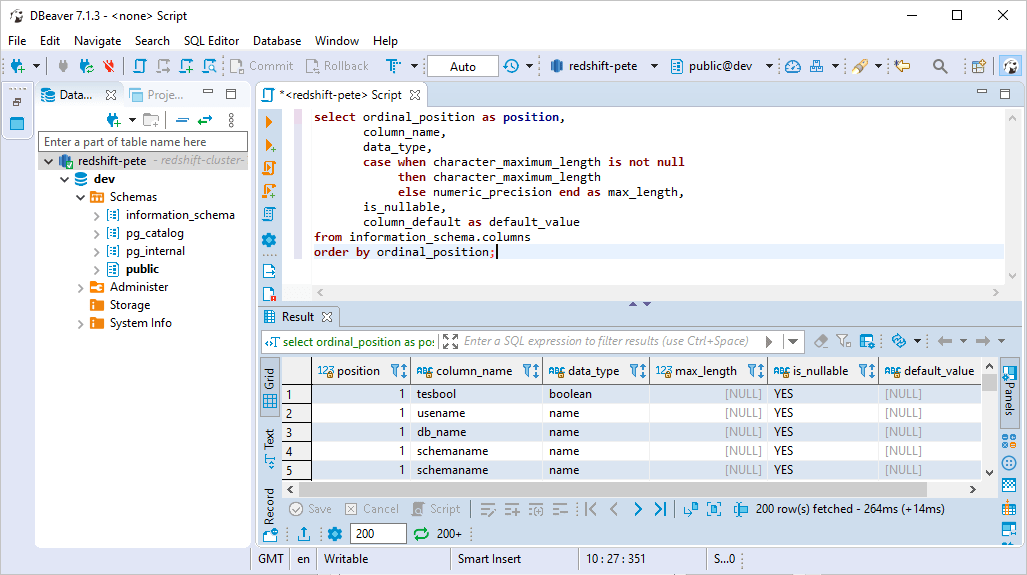

The destination for our scenario is going to be the Azure SQL database. This is the dataset that we aim to load into the SQL database on Azure. We just need at least one database object andįor demonstration purposes, I have created a simple table named test with just a few records in it as shown below.

The volume of data or schema of the database object is not important.

it would mean that the source system with data is in place. Logging on to the cluster using the query editor window. One of the simplest ways to add data is by Once the cluster is created, we need to add some sample data to it. Properties page as we would need it while creating the pipeline in Azure Data Factory. Take a note of the cluster endpoint from the On the name of the cluster, to view the properties page of the cluster. This will allow traffic from your client machine over the open internet to the AWSĪfter creating the cluster, you should be able to see an entry as shown below on the Clusters dashboard page. After changing this setting, you also need to add the IP of your client machine to the security group in which You intend to access the cluster over open internet temporarily, you change the Publicly Accessible setting value to Provide the name of the cluster, node type, number of nodes, as well as master credentials to create the cluster. Click on the Create Cluster button to open the cluster creation wizard as shown below. Log on to the AWS Account and search for AWS Redshift and click on the search results link. It’s assumed that you have an AWS account with the required privileges to create the Redshift cluster. As Redshift is the data source, let’s start with creating a Redshift cluster. As a pre-requisite to start this exercise, we need the source and destination systems in place. In this exercise, our aim is to import data from Amazon Redshift to Azure SQL Database. Let’s see how we can import data into the database on Azure from AWS Redshift in this article. So, it’s very probable that clients would have data on the Redshift, as well as Azure SQL databases in a multi-cloud scenario. AWS Redshift is a very popular and one of the pioneering columnar data warehouses on the cloud, which has been used by clients for many years.

In the era of cloud, most organizations have a multi-cloud footprint, and often there is a need to correlate data from different clouds for various purposes, for example, reporting. In this article, we will learn an approach to source data from AWS Redshift and populate it in the Azure SQLĭatabase, where this data can be used with other data on the SQL Server for desired purposes.Īzure SQL database is one of the de-facto transactional repositories on Azure.
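The Copy data wizard described in this article ultimately produces JSON definitions for a linked service and a pipeline. As a rough sketch of what those definitions might look like, the Python snippet below splits a Redshift cluster endpoint into its parts and builds both documents as plain dictionaries. The endpoint value, the credentials, and every name in it (`RedshiftSource`, `RedshiftTestTable`, `AzureSqlTestTable`, `CopyTestTable`) are hypothetical placeholders, and the type strings (`AmazonRedshift`, `AmazonRedshiftSource`, `AzureSqlSink`) reflect my understanding of the Data Factory connector schema, so verify them against the current connector documentation before relying on them.

```python
import json


def parse_endpoint(endpoint):
    """Split an endpoint of the form 'host:port/database' into its parts."""
    host, _, rest = endpoint.partition(":")
    port_text, _, database = rest.partition("/")
    return host, int(port_text), database


def build_redshift_linked_service(name, endpoint, username, password):
    """Build a linked service definition for the Redshift source (assumed schema)."""
    host, port, database = parse_endpoint(endpoint)
    return {
        "name": name,
        "properties": {
            "type": "AmazonRedshift",
            "typeProperties": {
                "server": host,
                "port": port,
                "database": database,
                "username": username,
                # In practice the secret belongs in a vault, not in source code.
                "password": {"type": "SecureString", "value": password},
            },
        },
    }


def build_copy_pipeline(name, source_dataset, sink_dataset, query):
    """Build a pipeline definition containing a single Copy activity."""
    return {
        "name": name,
        "properties": {
            "activities": [
                {
                    "name": "CopyRedshiftToAzureSql",
                    "type": "Copy",
                    "inputs": [
                        {"referenceName": source_dataset, "type": "DatasetReference"}
                    ],
                    "outputs": [
                        {"referenceName": sink_dataset, "type": "DatasetReference"}
                    ],
                    "typeProperties": {
                        "source": {"type": "AmazonRedshiftSource", "query": query},
                        "sink": {"type": "AzureSqlSink"},
                    },
                }
            ]
        },
    }


# Hypothetical endpoint and credentials, for illustration only.
linked_service = build_redshift_linked_service(
    "RedshiftSource",
    "examplecluster.abc123xyz.us-west-2.redshift.amazonaws.com:5439/dev",
    "awsuser",
    "<password>",
)
pipeline = build_copy_pipeline(
    "CopyTestTable", "RedshiftTestTable", "AzureSqlTestTable", "SELECT * FROM test"
)

print(json.dumps(linked_service, indent=2))
print(json.dumps(pipeline, indent=2))
```

Keeping the definitions as plain dictionaries makes them easy to inspect and compare with what the wizard generates. The two datasets referenced by the pipeline would need their own definitions, which are omitted here.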
